MCP Servers: The USB-C Moment for AI Agents

Model Context Protocol (MCP) is what happens when AI gets a universal connector: think USB-C, but for intelligent systems. It defines a simple client-server protocol that lets AI models tap into tools, data sources, and even complex workflows through lightweight, discoverable, and standardized interfaces.

This piece offers an overview of what MCP is, how it works, why it matters for AI development, and the current state of its adoption—equipping you with both conceptual understanding and practical context.

At its core, MCP defines a consistent way for AI systems to talk to external tools and data sources. Think of it as an interface spec that decouples AI agents from the systems they interact with. Instead of hardcoding each integration, developers define a server that exposes functionality in a known format, and AI clients (like Claude, ChatGPT, or a custom assistant) connect to it over a local transport (such as stdio) or a remote one (such as HTTP), exchanging JSON-RPC 2.0 messages.
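To make the JSON-RPC framing concrete, here is a minimal sketch of what a tool invocation might look like on the wire. The `tools/call` method and its `name`/`arguments` parameters follow the MCP specification; the `run_query` tool and its `sql` argument are hypothetical and stand in for whatever a given server exposes.

```python
import json


def make_tool_call(request_id: int, tool_name: str, arguments: dict) -> str:
    """Serialize a JSON-RPC 2.0 request asking a server to run one tool."""
    message = {
        "jsonrpc": "2.0",          # protocol version marker required by JSON-RPC
        "id": request_id,          # lets the client match the reply to this request
        "method": "tools/call",    # MCP method for invoking a tool
        "params": {"name": tool_name, "arguments": arguments},
    }
    return json.dumps(message)


# Hypothetical tool name and argument, for illustration only.
wire = make_tool_call(1, "run_query", {"sql": "SELECT 1"})
print(wire)
```

Because the envelope is plain JSON, the same message can travel over a local pipe or a network connection without the server logic changing.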

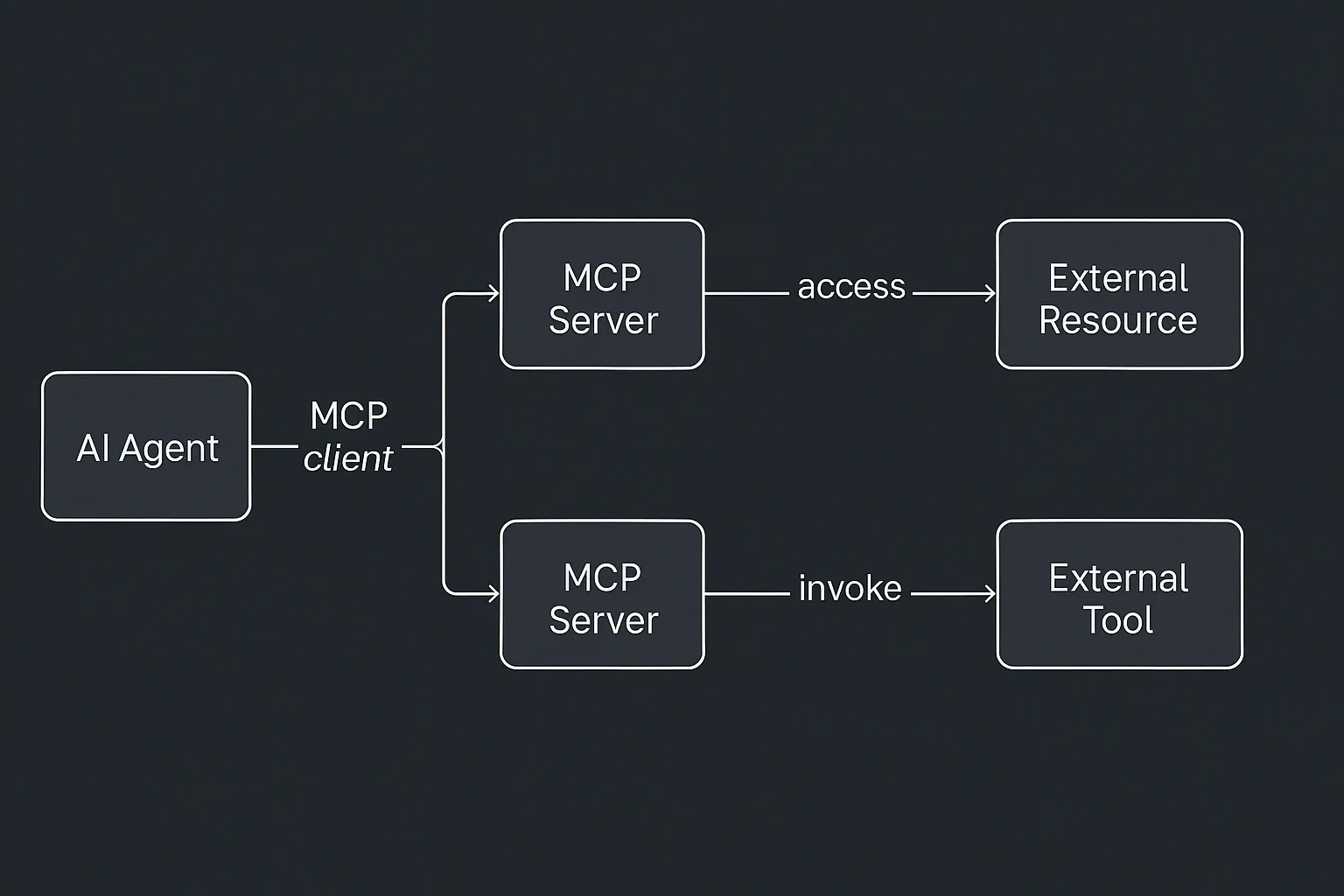

The protocol revolves around a client-server model:

- The MCP Client lives inside the AI application. It handles connections, capability discovery, and request routing.

- The MCP Server is a standalone program (often a microservice or container) that exposes specific functions (“tools”), data sources (“resources”), and instruction templates (“prompts”) in a format the client can understand.

When the AI agent needs to do something—say, look up a file, query a database, or invoke an external service—it uses the client to send a structured request to the appropriate server. That server executes the logic (like querying an API or scraping a document), and sends the result back to the client, which injects it into the AI’s context.
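The round trip described above can be sketched in a few lines. This is an in-process toy, not the official SDK: `server_handle`, `client_call`, the `read_file` tool, and the fake file contents are all assumptions made for illustration, and a real client and server would sit on opposite ends of a transport.

```python
import json


def read_file(path: str) -> str:
    """Hypothetical tool: look up a file in an in-memory stand-in for a disk."""
    fake_disk = {"notes.txt": "Ship the MCP demo on Friday."}
    return fake_disk[path]


TOOLS = {"read_file": read_file}


def server_handle(raw_request: str) -> str:
    """Server side: decode the request, run the tool, encode the result."""
    request = json.loads(raw_request)
    params = request["params"]
    try:
        text = TOOLS[params["name"]](**params["arguments"])
        result = {"content": [{"type": "text", "text": text}], "isError": False}
    except Exception as exc:  # tool failures are reported inside the result
        result = {"content": [{"type": "text", "text": str(exc)}], "isError": True}
    return json.dumps({"jsonrpc": "2.0", "id": request["id"], "result": result})


def client_call(tool: str, arguments: dict, context: list) -> None:
    """Client side: send the request, then append the reply to the model context."""
    raw = json.dumps({
        "jsonrpc": "2.0",
        "id": 1,
        "method": "tools/call",
        "params": {"name": tool, "arguments": arguments},
    })
    reply = json.loads(server_handle(raw))
    for item in reply["result"]["content"]:
        context.append(item["text"])


context = ["User: what is in notes.txt?"]
client_call("read_file", {"path": "notes.txt"}, context)
print(context)
```

The model never touches the file system directly; it only sees the text the client chose to add to its context.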

This separation has powerful implications. First, it abstracts away the complexity of external systems from the AI model. Second, it introduces a reusable, discoverable layer between AI logic and business logic. And third, it enables safety features like controlled access, authentication, and sandboxing—critical when models are allowed to act on external systems.
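As one illustration of controlled access, a client could gate tool calls behind an allowlist before anything reaches a server. The `ToolGate` class and the two toy tools below are hypothetical; MCP itself does not prescribe this particular mechanism.

```python
class ToolGate:
    """Hypothetical client-side policy: only allowlisted tools may run."""

    def __init__(self, allowed: set):
        self.allowed = allowed

    def call(self, tools: dict, name: str, **arguments):
        # Refuse before the tool is ever invoked.
        if name not in self.allowed:
            raise PermissionError(f"tool {name!r} is not permitted")
        return tools[name](**arguments)


# Toy tools standing in for real server capabilities.
tools = {
    "run_query": lambda sql: f"rows for: {sql}",
    "send_email": lambda to, body: f"sent to {to}",
}

gate = ToolGate(allowed={"run_query"})  # a read-only agent: no email access

print(gate.call(tools, "run_query", sql="SELECT 1"))
try:
    gate.call(tools, "send_email", to="a@example.com", body="hi")
except PermissionError as exc:
    print(exc)
```

Because every action passes through one choke point, the policy can be audited or tightened without retraining or re-prompting the model.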

MCP servers turn isolated AI models into connected, capable systems. By exposing structured context (via resources), actionable capabilities (via tools), and strategic guidance (via prompts), they give AI models the grounding and affordances needed to actually deliver value in real-world applications.

Why It Matters

Most AI agents today suffer from the same fatal flaw: they don’t do much. Sure, they can answer questions or write copy—but when it comes to taking action (querying a database, sending an email, booking a meeting), they need help.

MCP changes this. It equips AI with an interface layer to external systems, allowing agents to reason over live data and take meaningful actions. That turns them from passive advisors into active participants in workflows.

Anatomy of an MCP Server

Each server exposes three core things:

- Tools: Functions the model can invoke (like `send_email`, `run_query`)

- Resources: Read-only data the model can load into context (files, records)

- Prompts: Templates or examples that help the model use the tool effectively

This structure gives the AI a highly modular, inspectable environment. Tools can be scoped and versioned. Resources can be updated in real time. Prompts can carry domain-specific instructions that standardize behavior across models.
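The three primitives can be pictured as three small registries. The sketch below is a toy model rather than the real SDK: `SketchServer`, the resource URI, the report text, and the prompt template are all made up for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class SketchServer:
    """Toy registry mirroring the three MCP primitives (not the real SDK)."""

    tools: dict = field(default_factory=dict)      # name -> callable
    resources: dict = field(default_factory=dict)  # uri -> read-only content
    prompts: dict = field(default_factory=dict)    # name -> template string

    def list_capabilities(self) -> dict:
        """Roughly what a client would learn during discovery."""
        return {
            "tools": sorted(self.tools),
            "resources": sorted(self.resources),
            "prompts": sorted(self.prompts),
        }


server = SketchServer()
server.tools["send_email"] = lambda to, body: f"queued mail to {to}"
server.tools["run_query"] = lambda sql: f"executed: {sql}"
server.resources["file:///reports/example.txt"] = "Example report body."
server.prompts["summarize_report"] = "Summarize this report in three bullets:\n{report}"

print(server.list_capabilities())

# A prompt template can pull a resource into the text handed to the model.
prompt = server.prompts["summarize_report"].format(
    report=server.resources["file:///reports/example.txt"]
)
print(prompt)
```

Keeping the three kinds of capability separate is what makes the environment inspectable: a client can list everything a server offers before the model uses any of it.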

Plug-and-Play Interoperability

MCP is open and model-agnostic. That means:

- One GitHub MCP server can work with Claude, ChatGPT, or any other MCP-compatible agent.

- One developer can build a connector once, and every MCP-compatible client can use it.

- Teams can swap out or chain tools without hard dependencies.

This design encourages a “write once, serve many” approach.
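A rough sketch of "write once, serve many": two unrelated agents discover the same tools from a single connector because both speak the same protocol. The `github_server` function, the `Agent` class, and the tool names are hypothetical; only the `tools/list` method name and the JSON-RPC error code -32601 ("Method not found") come from the respective specifications.

```python
import json


def github_server(raw: str) -> str:
    """Hypothetical connector; it answers every client the same way."""
    request = json.loads(raw)
    if request["method"] == "tools/list":
        # Real servers also return a description and input schema per tool.
        result = {"tools": [{"name": "list_issues"}, {"name": "create_issue"}]}
        reply = {"jsonrpc": "2.0", "id": request["id"], "result": result}
    else:
        error = {"code": -32601, "message": "Method not found"}
        reply = {"jsonrpc": "2.0", "id": request["id"], "error": error}
    return json.dumps(reply)


class Agent:
    """Stand-in for any AI application that embeds an MCP client."""

    def __init__(self, name: str, server):
        self.name = name
        self.server = server

    def discover_tools(self) -> list:
        raw = json.dumps({"jsonrpc": "2.0", "id": 1, "method": "tools/list"})
        reply = json.loads(self.server(raw))
        return [tool["name"] for tool in reply["result"]["tools"]]


agents = [Agent("assistant-a", github_server), Agent("assistant-b", github_server)]
discovered = {agent.name: agent.discover_tools() for agent in agents}
print(discovered)
```

Neither agent contains any GitHub-specific code; swapping the connector means pointing the same client at a different server.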

What’s Already Happening

Since its open-source release by Anthropic in November 2024, MCP has rapidly gained traction across the AI industry:

- OpenAI: In March 2025, OpenAI announced support for MCP across its products, including the ChatGPT desktop app and Agents SDK.

- Microsoft: Collaborating with Anthropic, Microsoft introduced a C# SDK for MCP, facilitating integration with .NET applications.

- Google Cloud: At Google Cloud Next 2025, Google unveiled “Agentspace” and the “Agent2Agent” (A2A) protocol, which it positions as complementary to MCP.

- Azure AI: Microsoft’s Azure AI Agent Service now supports MCP.

- Enterprise Adoption: Companies like Block, Apollo, and Sourcegraph have integrated MCP into their systems.

- Open-Source Ecosystem: The MCP community has developed over 300 open-source MCP servers.

Developer Power Move

As a builder, you can now:

- Add new skills to your agent by running a Docker container.

- Write your own MCP server in Python, JS, or C#—SDKs exist for all major stacks.

- Host connectors remotely or locally, on Docker, Kubernetes, or even Cloudflare Workers.
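For a feel of what local hosting involves, here is a bare-bones sketch of a server loop over the stdio transport, where each line carries one JSON message. It uses only the standard library rather than an official SDK, handles just the `ping` method, and skips the initialization handshake a real server needs; the `serve` function name is an assumption.

```python
import io
import json


def serve(stdin, stdout) -> None:
    """Read one JSON-RPC message per line and write one reply per line."""
    for line in stdin:
        line = line.strip()
        if not line:
            continue
        request = json.loads(line)
        if "id" not in request:
            continue  # notifications carry no id and expect no reply
        if request.get("method") == "ping":
            reply = {"jsonrpc": "2.0", "id": request["id"], "result": {}}
        else:
            reply = {
                "jsonrpc": "2.0",
                "id": request["id"],
                "error": {"code": -32601, "message": "Method not found"},
            }
        stdout.write(json.dumps(reply) + "\n")
        stdout.flush()


# Demo with in-memory streams; a local server process would instead
# pass its real standard input and output to serve().
fake_in = io.StringIO('{"jsonrpc": "2.0", "id": 1, "method": "ping"}\n')
fake_out = io.StringIO()
serve(fake_in, fake_out)
print(fake_out.getvalue(), end="")
```

In practice the SDKs take care of this loop, plus capability negotiation and schema validation, so this is only meant to show how little the transport itself demands.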

MCP isn’t another dev tool—it’s a design pattern for composable AI.

Strategic Implications

- Standardization → Ecosystem: Just as HTTP gave the web a common way to communicate, MCP is creating a shared AI interface layer.

- Composable Agents: One agent’s output becomes another agent’s context, via MCP resources.

- New Categories: Entire products are emerging as “agent hubs” or “MCP marketplaces.”

What will you build?

If you’re building AI tools in 2025, don’t hardcode integrations; build an MCP server. MCP gives your agent the ability to act, scale, and plug into a broader ecosystem.

Check out these starting points: